this post was submitted on 14 Nov 2024

Programmer Humor

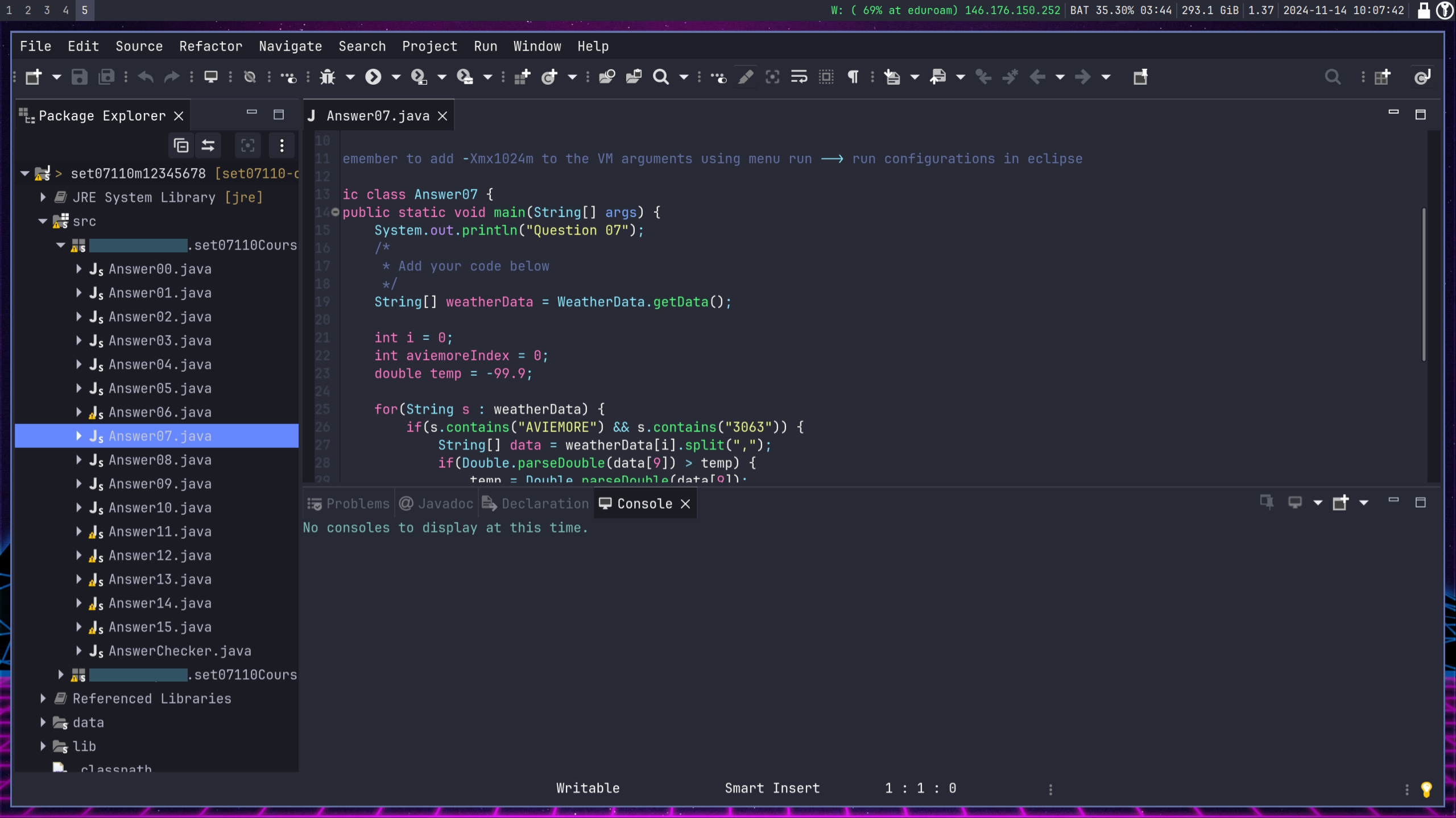

Most of my college coursework was around OOP. That said, they actually did a pretty lousy job of explaining it in a practical sense, and since we were left to figure it out ourselves, a lot of our assignments ended up looking like this.

At the end of the program, our capstone project was to build a full stack app. They did a pretty good job simulating a real professional experience: we all worked together on requirements gathering and database design, then were expected to build our own app.

To really drive home the real world experience, the professor would change the requirements partway through the project. Which is a total dick move, but actually a good lesson on its own.

Anyway, this app was mostly about rendering a bunch of different views, and something subtle would change that ended up affecting all of the views. After the fact, the professor would say something to the effect of "If you used good objects, you'll only have to make the change in one place."
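To make the professor's "change in one place" point concrete, here is a minimal sketch. The thread never describes the actual app, so `Money`, `invoice_view`, and `dashboard_view` are hypothetical names, and the currency format is just an assumed example of a requirement that touches every view.

```python
from dataclasses import dataclass


@dataclass
class Money:
    """Hypothetical value object that owns the display rule for every view."""

    cents: int

    def display(self) -> str:
        # The single place to edit if the display requirement changes.
        return f"${self.cents / 100:.2f}"


def invoice_view(total: Money) -> str:
    # Each view asks the object for its text instead of formatting it itself.
    return f"Invoice total: {total.display()}"


def dashboard_view(total: Money) -> str:
    return f"Revenue so far: {total.display()}"
```

If the requirement changes to, say, a different currency symbol, only `Money.display` needs editing. That only pays off if the formatting was routed through one object from the start, which is the catch described in the next comment.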

This of course is only helpful if you really appreciated the power of OOP and planned for it from the start. Most of us were flying by the seat of our pants, so it was usually a ton of work to get those changes in.

IMO that's generally a retroactive statement, because in practice you have to be lucky for that to be true. An abstraction along one dimension, even a good one, will limit flexibility in some other dimension.

Occasionally, everything comes into alignment and an opportunity appears to reliably-ish predict the correct abstraction the future will need.

Most every other time, you're better off avoiding the possibility of the really costly bad abstraction by resisting the urge to abstract preemptively.

Generally true, but if the professor in this context was not a moron, he probably mentioned at the start of the class that he would be forcing a change to requirements part way through the course. Ideally, he would've specified what kind of changes this would be, in order for the students to account for that in their design. I think it's likely this happened, but the student was lacking so much experience he didn't understand that hint or what he needed to do in the design in order to later swap parameters more easily. I'm going to withhold judgement on this professor having only heard a biased account. It could've been a very good assignment, now being told from the perspective of a mediocre student.

I wasn't aware my mediocrity was on display. 😅

Honestly, I liked the professor. When he had time to teach something he was clearly interested in, he did a great job of connecting. He didn't get to teach us OOP though, because there was a staffing emergency. The person we did get normally taught Hardware, so he was basically just reading the textbook aloud. Poor guy.

And you're right, the professor did let us know that there was going to be a change of requirements partway through. But it wouldn't be a good lesson if he told us what was going to change, although he did give some examples from previous times he'd taught the course.

A lot of people got burned when the change came. For my part, I thought I did pretty okay: the refactor didn't go perfectly, but it was better than if I hadn't been prepared. But I've also written a bunch of really gross objects that served no purpose just because they might change later. As with anything, it's all about finding that happy medium.

That's a fair assessment. It's kind of like the rule for premature optimization: don't.

With experience you might get some intuition about when it's good to lean into inheritance. We were definitely lacking experience at that point though.

OOP is a pretty powerful paradigm, which means it also gives you enough rope to hang yourself with. See also just about any other meme here about OOP.

Same. I always remember this with interfaces and inheritance: shoehorned-in BS where I'm only using 1 class anyway and talking to 1 other class. What's the point of this?

After I graduated, I made a wiki for a game as a personal project, and I was reusing a lot of code: "huh, a parent class would be nice here".

In my first job: "I don't know who's going to use this thing I'm building, but these are the rules." Proceeds to implement an interface.

When I have to teach these concepts to juniors now, this is how I teach them: inheritance to avoid code duplication, interfaces to tell other people what they need to implement in order to use your stuff.

I wonder why I wasn't taught it that way. I remember looking at my projects that used this stuff thinking it was just messy rubbish. More importantly, I graduated not understanding this stuff...

I wouldn't say that inheritance is for avoiding code duplication. It should be used to express an "is a" relationship. An example seen in one of my projects: a mixin with error-handling code for a REST service client is used for more than one service, but its log messages are tightly coupled to one particular service. That happened precisely because someone thought it was OK to reuse it.

In my opinion, inheritance makes sense when you can follow the Liskov substitution principle. Otherwise you should be careful.
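A small sketch of what following Liskov's principle can look like in practice. The classes here are hypothetical and not from anyone's project: code written against the base type should keep working when handed any subclass.

```python
class Stack:
    """Base type. Contract: pop() returns the item most recently pushed."""

    def __init__(self) -> None:
        self._items: list[int] = []

    def push(self, item: int) -> None:
        self._items.append(item)

    def pop(self) -> int:
        return self._items.pop()


class CountingStack(Stack):
    """Substitutable: adds a counter but keeps the push/pop contract."""

    def __init__(self) -> None:
        super().__init__()
        self.pushes = 0

    def push(self, item: int) -> None:
        self.pushes += 1
        super().push(item)


class EvenOnlyStack(Stack):
    """Not substitutable: silently drops odd items, so callers of Stack break."""

    def push(self, item: int) -> None:
        if item % 2 == 0:
            super().push(item)


def round_trip(stack: Stack, item: int) -> bool:
    """A caller that relies only on the base contract."""
    stack.push(item)
    try:
        return stack.pop() == item
    except IndexError:
        # The pushed item never arrived, so the contract was broken.
        return False
```

`round_trip` holds for `Stack` and `CountingStack` with any integer, but fails for `EvenOnlyStack` with an odd one. That is the kind of "is a" claim that inheritance makes and this subclass does not honor.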

You're not wrong, but I think when you're teaching someone, having just 1 parent and 1 child class makes for a bad example. I generally prefer to use something with a lot of different children.

My go-to is exporters. We have the exporter interface, the generic exporter, the accounting exporter and the payroll exporter, to explain it.
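The exporter example might look roughly like this. It is only a sketch: the comment names the four pieces but not their contents, so the CSV format, the column names, and `run_export` are assumptions made for illustration.

```python
from abc import ABC, abstractmethod

Row = dict[str, object]


class Exporter(ABC):
    """The interface: what other people must implement to plug in."""

    @abstractmethod
    def export(self, rows: list[Row]) -> str:
        ...


class GenericExporter(Exporter):
    """Shared CSV logic lives here so the children do not duplicate it."""

    columns: tuple[str, ...] = ()

    def export(self, rows: list[Row]) -> str:
        header = ",".join(self.columns)
        lines = [",".join(str(row[c]) for c in self.columns) for row in rows]
        return "\n".join([header, *lines])


class AccountingExporter(GenericExporter):
    # Assumed columns, for illustration only.
    columns = ("account", "amount")


class PayrollExporter(GenericExporter):
    columns = ("employee", "salary")


def run_export(exporter: Exporter, rows: list[Row]) -> str:
    # Callers depend on the interface only, never on a concrete exporter.
    return exporter.export(rows)
```

With several children the split becomes visible: the interface is the promise to callers, the generic exporter holds the code that would otherwise be copied, and each child only states what differs.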

At school, the only time I used inheritance was with 1 parent (booking) and 1 child (luxury). This is a terrible example imo.

Maybe that example was made terrible because the author couldn't think of a good way to show how great this can be. I'm obviously a fan of SOLID, and OCP is exactly why I don't worry if I have only one class at the beginning. Because I know that eventually requirements will change and I'll end up with more classes.

Some time ago, a less experienced coworker asked me during a code review why I wrote a particularly complex piece of code instead of just having a bunch of if statements. Eventually this piece got extended to do several other things, but because it was structured well, extending it was easy, with minimal impact on the codebase. This is why design matters.
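The comment doesn't show the code in question, so the following is only one possible shape of "structured instead of a bunch of if statements": a handler registry, where new cases are added without editing the dispatch function. The shipping domain and all names are made up for the sketch.

```python
from typing import Callable

Handler = Callable[[float], float]

# Maps a case name to the function that handles it.
HANDLERS: dict[str, Handler] = {}


def register(kind: str) -> Callable[[Handler], Handler]:
    """Decorator that adds a handler to the registry under the given name."""

    def decorator(func: Handler) -> Handler:
        HANDLERS[kind] = func
        return func

    return decorator


@register("standard")
def standard_shipping(weight_kg: float) -> float:
    return 5.0 + 1.0 * weight_kg


@register("express")
def express_shipping(weight_kg: float) -> float:
    return 10.0 + 2.0 * weight_kg


def shipping_cost(kind: str, weight_kg: float) -> float:
    """Dispatch without an if/elif chain; this function never needs editing."""
    try:
        handler = HANDLERS[kind]
    except KeyError:
        raise ValueError(f"unknown shipping kind: {kind}") from None
    return handler(weight_kg)
```

Adding a third kind means writing one new decorated function. For two cases this is arguably more machinery than an `if`, which is the trade-off the rest of the thread is arguing about.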

The above claims are based on nearly 2 decades of writing software, 3/4 of it in big companies with very complex requirements.

What? No, inheritance is not for code sharing.

Sound bite aside, inheritance is a higher level concept. That it "shares code" is one of several effects.

The simple, plain, decoupled way to share code is the humble function call, i.e. a static method in certain languages.
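As a minimal illustration of sharing code through a plain function rather than a parent class, here are two otherwise unrelated classes calling one helper. `slugify`, `WikiPage`, and `BlogPost` are hypothetical names.

```python
def slugify(title: str) -> str:
    """Shared helper: lower-case the words and join them with hyphens."""
    return "-".join(title.lower().split())


class WikiPage:
    def __init__(self, title: str) -> None:
        self.title = title

    def url(self) -> str:
        return f"/wiki/{slugify(self.title)}"


class BlogPost:
    def __init__(self, title: str) -> None:
        self.title = title

    def url(self) -> str:
        return f"/blog/{slugify(self.title)}"
```

Neither class has to claim an "is a" relationship with the other, or with some shared parent, just to reuse one line of logic.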

I've mostly come to prefer composition; this approach apparently even has a wiki page. But that's in part because I use Rust, which has no inheritance, so I don't have to deal with such bullshit (from the delegation wiki page):

Why would one substitute `b` as `this` when called from `b.a` is beyond me, seriously.
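The snippet the comment quotes from the wiki page did not survive here, so this is not a reconstruction of it. It is just a hedged sketch, with hypothetical classes `A` and `B`, of the difference being complained about: plain forwarding through a composed object versus delegation that rebinds the receiver.

```python
class A:
    def name(self) -> str:
        return "a"

    def describe(self) -> str:
        # Which name() runs depends on what self is bound to.
        return f"describe() running on {self.name()}"


class B:
    """Composition: B holds an A instead of inheriting from it."""

    def __init__(self, a: A) -> None:
        self.a = a

    def name(self) -> str:
        return "b"

    def describe(self) -> str:
        # Plain forwarding: inside A.describe, self is still the A instance.
        return self.a.describe()


b = B(A())
forwarded = b.describe()   # self stays the inner A
rebound = A.describe(b)    # delegation-style: b is substituted as self
```

In the forwarding case the inner object never sees `b`, so its behavior cannot be changed from outside. In the rebinding case, `A.describe` ends up calling `B.name`, which is the surprise the commenter objects to.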