Investment giant Goldman Sachs published a research paper

Goldman Sachs researchers also say that

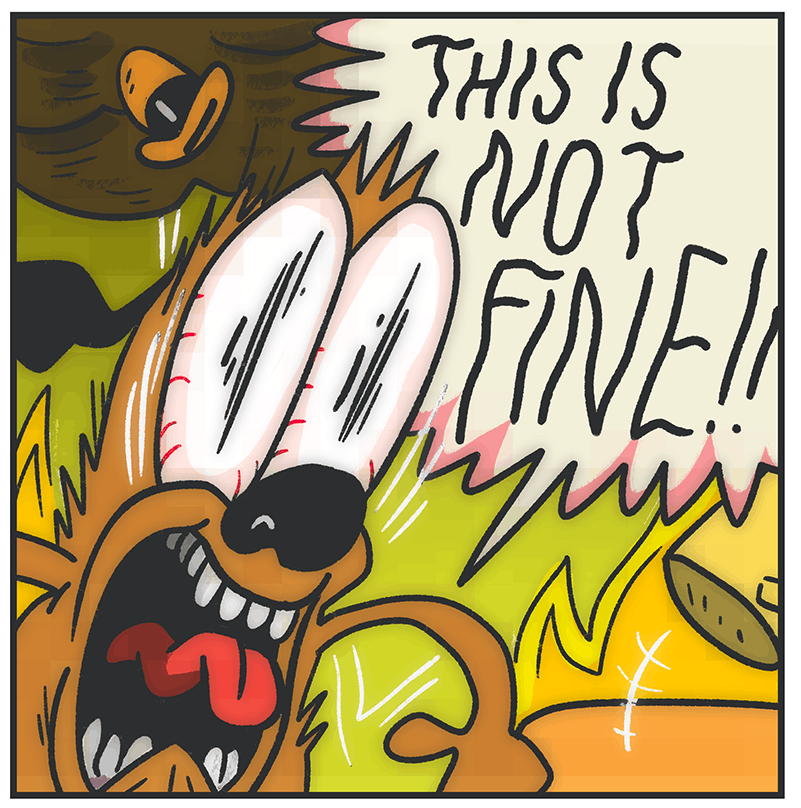

It's not a research paper; it's a report. They're not researchers; they're analysts at a bank. This may seem like a nit-pick, but journalists need to (re-)learn to carefully distinguish between the thing that scientists do and corporate R&D, even though we sometimes use the word "research" for both. The AI hype in particular has been absolutely terrible for this. Companies have learned that putting out AI "research" that's just them poking at their own product, dressed up in a science-lookin' paper, leads to an avalanche of free press from lazy, credulous morons gorging themselves on the hype. I've written about this problem a lot, for example in this post about how Google wrote a so-called paper on how their LLM does compared to doctors, only for the press to uncritically repeat (and embellish) the results all over the internet. Had anyone in the press actually fucking bothered to read the paper critically, they would've noticed that it's junk science.

There's the old joke that metalheads are nice people pretending to be mean, while hippies are mean people pretending to be nice.